Wheel Factorization

May 8, 2009

Having discussed prime numbers in several previous exercises, we are now interested in the problem of factoring an integer n; for instance, the prime factors of 42 are 2, 3, and 7. A simple factoring method is to perform trial division by all the integers counting from 2 to the square root of n. Your first task is to write that function.
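As a point of reference, naive trial division might be sketched in Python as follows. The function name `trial_division` is our own choice for illustration, and the sketch returns the prime factors with multiplicity in ascending order.

```python
def trial_division(n):
    """Return the prime factors of n (with multiplicity) in ascending order."""
    factors = []
    d = 2
    while d * d <= n:          # no need to look past the square root of n
        while n % d == 0:      # divide out every copy of d
            factors.append(d)
            n //= d
        d += 1
    if n > 1:                  # whatever remains is itself prime
        factors.append(n)
    return factors

print(trial_division(42))  # [2, 3, 7]
```

Because each divisor is divided out completely before moving on, a composite trial divisor can never divide what remains, so only primes end up in the result.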

An easy optimization is to divide only by 2 and then by odd integers greater than 2, which saves half the work. A better optimization is to divide by 2, then 3, then 5, and thereafter to alternately add 2 and 4 to the trial divisors — 7, 11, 13, 17, 19, 23, and so on — since all prime numbers greater than 3 are of the form 6k±1 for some integer k.

It turns out that both those optimizations are special cases of a technique called wheel factorization. Consider a 2-wheel of circumference 2 rolling along a number line with a “spoke” at the number 1; if you start with the spoke at 3 on the number line, it will strike the number line at 5, then 7, and then every odd number after that. Or consider a 2,3-wheel of circumference 2×3=6 with spokes at the number 1 and 5; if you start with the 5-spoke at 5 on the number line, it will strike the number line at 7, 11, 13, 17, 19, 23, and so on. Or consider a 2,3,5-wheel of circumference 2×3×5=30 with spokes at 1, 7, 11, 13, 17, 19, 23 and 29 starting with the 7-spoke at 7. And so on: next is a 2,3,5,7-wheel, then a 2,3,5,7,11-wheel, and the sequence continues infinitely.

Wheel factorization works by performing trial division at each place where a spoke touches the number line. As the wheels grow larger, more and more of the trial divisors are prime, so less and less unnecessary work is done. Of course, there is a point of diminishing returns; when the wheel gets too large, it is just as much work to compute the wheel as to compute the list of primes, and costs just as much to store. But a small wheel is easy to compute, and not too big, and provides a simple optimization over naive trial division.

The spokes of the wheel are computed by looking for co-primes, which are those numbers for which the spoke has no factors in common with the circumference of the wheel; in other words, where the greatest common divisor of the spoke and the circumference is 1. For instance, a 2,3,5-wheel has a spoke at 17 because the greatest common divisor of 17 and 30 is 1, but no spoke at 18 because the greatest common divisor of 18 and 30 is 6. These numbers are called totatives; if you’re curious about the math behind them, ask your favorite search engine for information about Euler’s totient function.
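The gcd test described above is short enough to sketch directly. The helper name `wheel_spokes` is hypothetical; it takes the primes that define the wheel and returns the spoke positions.

```python
from math import gcd, prod

def wheel_spokes(primes):
    """Return the spokes (totatives) of the wheel built from the given primes."""
    circumference = prod(primes)
    return [s for s in range(1, circumference + 1)
            if gcd(s, circumference) == 1]

print(wheel_spokes([2, 3]))     # [1, 5]
print(wheel_spokes([2, 3, 5]))  # [1, 7, 11, 13, 17, 19, 23, 29]
```

The number of spokes is the value of Euler's totient function at the circumference, so a 2,3,5,7-wheel of circumference 210 has 48 spokes.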

It is easy to see this visually. Here is a list of the positive integers to 42, with primes shown in bold:

1 **2** **3** 4 **5** 6

**7** 8 9 10 **11** 12

**13** 14 15 16 **17** 18

**19** 20 21 22 **23** 24

25 26 27 28 **29** 30

**31** 32 33 34 35 36

**37** 38 39 40 **41** 42

After the first row, all the primes are in two columns, which correspond to the two spokes of a 2,3-wheel. If 853 were input to the 2,3-wheel factorization function, we would trial divide by 2, 3, 5, 7, 11, 13, 17, 19, 23, 25, and 29 before concluding that 853 was prime; note that 25 is not prime, but is relatively prime to the circumference of the wheel.
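One possible way to put the pieces together is sketched below. It is an illustration rather than the suggested solution: the name `wheel_factors` and its `basis` parameter are our own, and the wheel is rebuilt on every call for simplicity.

```python
from math import gcd, prod

def wheel_factors(n, basis=(2, 3, 5, 7)):
    """Return the prime factors of n (with multiplicity) using a wheel on `basis`."""
    if n < 2:
        return []
    factors = []
    for p in basis:                      # strip the wheel primes themselves
        while n % p == 0:
            factors.append(p)
            n //= p
    circumference = prod(basis)
    spokes = [s for s in range(1, circumference + 1)
              if gcd(s, circumference) == 1]
    # Distance from each spoke to the next, wrapping around to the 1-spoke.
    gaps = [b - a for a, b in
            zip(spokes, spokes[1:] + [spokes[0] + circumference])]
    d, i = 1, 0
    while True:
        d += gaps[i]                     # roll the wheel to the next spoke
        i = (i + 1) % len(gaps)
        if d * d > n:
            break
        while n % d == 0:
            factors.append(d)
            n //= d
    if n > 1:                            # the remaining cofactor is prime
        factors.append(n)
    return factors

print(wheel_factors(853, basis=(2, 3)))  # [853]
print(wheel_factors(600851475143))       # [71, 839, 1471, 6857]
```

With `basis=(2, 3)` the trial divisors after 2 and 3 are 5, 7, 11, 13, 17, 19, 23, 25, 29, which matches the walk-through for 853.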

Your second task is to write a function that finds the factors of a given number using wheel factorization; you should compute and use a 2,3,5,7-wheel. What are the factors of 600851475143? When you are finished, you are welcome to read or run a suggested solution, or post your own solution or discuss the exercise in the comments below.

Priority Queues

May 5, 2009

A priority queue is a data structure in which items arrive randomly and depart according to an ordering predicate. It is similar to a normal queue, in which items depart in the order they arrive (first-in, first-out), and a stack, in which items depart in the opposite of the order in which they arrive (last-in, first-out). The operations on priority queues include insert to add a new item to the queue, find-first to return the first item in the queue, delete-first to return the remaining items after the first, and merge to merge two queues. Priority queues are used in simulations, where keys correspond to “event times” which must be processed in order, in job scheduling for computer systems, where more-important jobs must be performed before less-important jobs, and in many other applications.

There are many ways to implement priority queues. An unordered list makes it easy to insert new items, but each time an item is extracted the entire list must be scanned. An ordered list makes extraction quick but requires a scan of half the list, on average, each time an item is inserted. Binary trees give a total ordering of all the items in a priority queue, but we only need to be able to identify the first item, so they do more work than we need. We will implement priority queues using leftist heaps.

A heap is a binary tree in which each node precedes its two children in a total ordering; the ordering predicate may be less-than or greater-than, as appropriate for the particular heap. A leftist heap satisfies the additional criterion that the rank of each left node is greater than or equal to the rank of its right sibling, where the rank of a node is the length of its right spine. As a result, the right spine of any node is always the shortest path to an empty node. The name leftist heap derives from the fact that the left subtree is usually taller than the right subtree, so a drawing of a leftist heap tends to “lean” left.

The fundamental operation on leftist heaps is the merge of two leftist heaps. This is accomplished by merging their right spines in the same manner as merging two sorted lists; this preserves the heap-order property. Then the children of the nodes along that new path are swapped as necessary to preserve the leftist property.

Given merge, the remaining operations are trivial. Insert builds a singleton priority queue, then merges it to the existing priority queue. Find-first simply returns the item at the root of the tree. Delete-first merges the two children of the root.
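The operations described above might be sketched in Python as follows. This is a minimal persistent version under our own assumptions: an empty heap is `None`, a node is a tuple `(rank, item, left, right)`, and the ordering predicate defaults to less-than. All of the function names are illustrative.

```python
from operator import lt

def rank(h):
    """Length of the right spine; an empty heap has rank 0."""
    return h[0] if h else 0

def make(item, a, b):
    """Build a node, placing the higher-rank child on the left."""
    if rank(a) >= rank(b):
        return (rank(b) + 1, item, a, b)
    return (rank(a) + 1, item, b, a)

def merge(h1, h2, before=lt):
    """Merge two leftist heaps along their right spines."""
    if h1 is None:
        return h2
    if h2 is None:
        return h1
    if before(h2[1], h1[1]):
        h1, h2 = h2, h1                  # keep the earlier root on top
    _, item, left, right = h1
    return make(item, left, merge(right, h2, before))

def insert(item, h, before=lt):
    return merge((1, item, None, None), h, before)

def find_first(h):
    if h is None:
        raise IndexError("empty priority queue")
    return h[1]

def delete_first(h, before=lt):
    if h is None:
        raise IndexError("empty priority queue")
    return merge(h[2], h[3], before)

# Usage: insert a few items, then remove them in priority order.
pq = None
for x in [5, 3, 8, 1, 9, 2]:
    pq = insert(x, pq)
out = []
while pq is not None:
    out.append(find_first(pq))
    pq = delete_first(pq)
print(out)  # [1, 2, 3, 5, 8, 9]
```

Because the recursion in `merge` only follows right spines, its depth is logarithmic in the number of items.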

Leftist heaps were invented by Clark Crane in 1972 and popularized by Donald Knuth in 1973.

Your task is to implement the priority queue data structure using leftist heaps. When you are finished, you are welcome to read or run a suggested solution, or to post your solution or discuss the exercise in the comments below.

Primality Checking

May 1, 2009

In a previous exercise you wrote a function that returned a list of prime numbers, and in another exercise you used that function to find a particular prime number. This exercise looks at prime numbers from a different perspective by considering a function that takes a number and determines if it is prime.

The algorithm that we will consider was developed initially by Gary Miller and refined by Michael Rabin, and is probabilistic in nature. It works like this: Express the odd number n to be tested as $n = 2^r s + 1$ with s odd. Then choose a random integer a with 1 ≤ a ≤ n-1 and check if $a^s \equiv 1 \pmod{n}$ or $a^{2^j s} \equiv -1 \pmod{n}$ for some 0 ≤ j ≤ r-1. (In words, the last expression is a raised to the power 2 to the j times s.) A prime number will pass the check for all a. A composite number will pass the check for at most 1/4 of the possible values of a and fail the check for the remaining values. Thus, to determine if a number n is prime, check multiple values of a; if k values are checked, this algorithm will err less than one time in $4^k$. Most primality checkers set k to somewhere between 25 and 50, making the chance of error very small.
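A minimal sketch of the check in Python follows. Two details are our own assumptions rather than part of the description: small primes are handled by direct division first, and the random base is drawn from 2 to n-2, since the bases 1 and n-1 pass for every odd n and so carry no information.

```python
import random

def is_prime(n, k=25):
    """Miller-Rabin test: False means composite, True means probably prime."""
    if n < 2:
        return False
    for p in (2, 3, 5, 7):
        if n % p == 0:
            return n == p
    # Here n is odd and at least 11. Write n - 1 = 2^r * s with s odd.
    r, s = 0, n - 1
    while s % 2 == 0:
        r += 1
        s //= 2
    for _ in range(k):
        a = random.randrange(2, n - 1)   # base in the range 2..n-2
        x = pow(a, s, n)                 # a^s mod n
        if x == 1 or x == n - 1:
            continue                     # passes with j = 0
        for _ in range(r - 1):
            x = pow(x, 2, n)             # a^(2^j * s) mod n for j = 1..r-1
            if x == n - 1:
                break                    # passes for this j
        else:
            return False                 # a is a witness: n is composite
    return True

print(is_prime(2**89 - 1))  # True
```

The three-argument form of `pow` performs modular exponentiation without ever building the full power, which is what makes the test practical for large n.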

Your task is to write a function that determines if an input number n is prime, then to determine if $2^{89}-1$ is prime. When you are finished, you are welcome to read or run a suggested solution, or post your solution or discuss the exercise in the comments below.

Morse Code

April 28, 2009

Yesterday was the 218th anniversary of the birth of Samuel F. B. Morse, who invented the telegraph. We present this simple exercise in his honor.

Morse Code is an encoding of alphanumeric characters using short and long pulses of sound. Originally developed in the 1840s for the use of Morse’s telegraph, it is still in use today, primarily in the fields of amateur radio and aviation. Letters and digits consist of dots and dashes as shown in the chart below; a single space is inserted between characters, and an extra space is inserted between words. For instance, “Programming Praxis” is rendered in Morse code as the string “•——• •—• ——— ——• •—• •— —— —— •• —• ——•  •——• •—• •— —••— •• •••”.

| Letter | Code | Letter | Code | Digit | Code |
| --- | --- | --- | --- | --- | --- |
| A | •— | N | —• | 1 | •———— |
| B | —••• | O | ——— | 2 | ••——— |
| C | —•—• | P | •——• | 3 | •••—— |
| D | —•• | Q | ——•— | 4 | ••••— |
| E | • | R | •—• | 5 | ••••• |
| F | ••—• | S | ••• | 6 | —•••• |
| G | ——• | T | — | 7 | ——••• |
| H | •••• | U | ••— | 8 | ———•• |
| I | •• | V | •••— | 9 | ————• |
| J | •——— | W | •—— | 0 | ————— |
| K | —•— | X | —••— | | |
| L | •—•• | Y | —•—— | | |
| M | —— | Z | ——•• | | |

Your task is to write functions that convert back and forth between character strings and Morse code. When you are finished, you are welcome to read or run a suggested solution, or to post your solution or discuss the exercise in the comments below.
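One way the two conversions might look in Python is sketched below. As an assumption for readability, the ASCII characters `.` and `-` stand in for the dot and dash of the chart; the names `to_morse` and `from_morse` are illustrative. Characters are separated by one space and words by two, as described above.

```python
import string

LETTERS = (".- -... -.-. -.. . ..-. --. .... .. .--- -.- .-.. -- "
           "-. --- .--. --.- .-. ... - ..- ...- .-- -..- -.-- --..").split()
DIGITS = "----- .---- ..--- ...-- ....- ..... -.... --... ---.. ----.".split()

# Map A-Z and 0-9 to their codes, and build the reverse table for decoding.
MORSE = dict(zip(string.ascii_uppercase + string.digits, LETTERS + DIGITS))
DECODE = {code: char for char, code in MORSE.items()}

def to_morse(text):
    """Encode text; raises KeyError for characters outside A-Z and 0-9."""
    words = text.upper().split()
    return "  ".join(" ".join(MORSE[c] for c in word) for word in words)

def from_morse(code):
    """Decode a string produced by to_morse back into upper-case text."""
    words = code.strip().split("  ")
    return " ".join("".join(DECODE[sym] for sym in word.split())
                    for word in words)

encoded = to_morse("Programming Praxis")
print(encoded)
print(from_morse(encoded))  # PROGRAMMING PRAXIS
```

Morse code has no notion of letter case, so the round trip returns upper-case text.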

Word Hy-phen-a-tion By Com-pu-ter

April 24, 2009

In 1983, Frank Liang published in his doctoral dissertation a description of the hyphenation algorithm that he invented for Donald Knuth’s TeX typesetting program; his algorithm is the basis of most of the hyphenation programs used today. Liang’s algorithm is described in Appendix H of The TeXbook by Knuth. Liang’s algorithm never inserts a hyphen where one does not belong, but does miss some opportunities to insert hyphens where they do belong; Liang claims to find 95% of the hyphens in most texts. Liang’s algorithm is quick, and reasonably stingy with space.

Liang’s method works by pre-computing a list of hyphenating and inhibiting patterns based on a given hyphenation dictionary. First, a list of hyphenating patterns is established; for instance, -tion and c-c are good hyphenating patterns. But all the patterns have exceptions; for instance, the -tion pattern improperly hyphenates the word cat-ion. Thus, a set of inhibiting patterns prevents hyphenations; in this case, the inhibition pattern .ca=t (the dot indicates the beginning or end of a word, the equal-sign inhibits a hyphen) overrides the hyphenation pattern and prevents the hyphen at ca-tion. Of course, there are exceptions to the inhibition patterns; Liang’s algorithm goes five levels deep (hyphenation, inhibition, hyphenation, inhibition, hyphenation) to get a good set of patterns, and even then requires an exception listing to fix a few words that would otherwise be hyphenated incorrectly. It is also required that a word must have at least five letters to be hyphenated, no hyphens can be inserted after the first letter or before the last letter of a word, and any word that includes non-alphabetic characters is never hyphenated.

Consider this example of hyphenating the word hyphenation: there are two hyphenating patterns 1na and 1tio, then three inhibiting patterns n2at, 2io, and he2n, then another hyphenating pattern hy3ph, another inhibiting pattern hena4, and finally another hyphenating pattern hen5at:

    . h y p h e n a t i o n .
               1n a
                   1t i o
                n2a t
                     2i o
            h e2n
      h y3p h
            h e n a4
            h e n5a t
    .0h0y3p0h0e2n5a4t2i0o0n0.
      h y-p h e n-a t i o n

After all the patterns present in the word are identified, the highest number at any inter-letter position wins (we’ll call that number a rule), and hyphens are inserted at all the odd-numbered rules. In this case, the 1na pattern originally placed a hyphen at hyphe-nation, but the he2n pattern inhibited the hyphen, because rule 2 trumps rule 1; likewise, hena4 inhibited 1tio. Note that three patterns start at the fourth letter of hyphenation: he2n, hena4, and hen5at.

Liang’s hyphenation algorithm iterates through the letters of the input word, identifying all the patterns that are present in the word, and takes the maximum rule at each position. The task of identifying the patterns calls for some sort of search algorithm; Liang used a trie that he cleverly packed into an array.
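The matching step can be sketched in Python as follows. This is a simplified illustration under stated assumptions: it looks up every substring of the word in a dictionary instead of using Liang's packed trie, and the pattern list `PATTERNS` contains only the eight patterns from the hyphenation example, not the real TeX pattern set. The names `parse` and `hyphenate` are our own.

```python
import re

def parse(pattern):
    """Split a pattern such as 'hy3ph' into its letters and its rule numbers."""
    letters = re.sub(r"\d", "", pattern)
    # points[i] is the rule just before letters[i]; the last entry follows
    # the final letter.
    points = [0] * (len(letters) + 1)
    i = 0
    for ch in pattern:
        if ch.isdigit():
            points[i] = int(ch)
        else:
            i += 1
    return letters, points

# Illustrative pattern set: only the patterns used in the example above.
PATTERNS = dict(parse(p) for p in
                "1na 1tio n2at 2io he2n hy3ph hena4 hen5at".split())

def hyphenate(word, patterns=PATTERNS, min_len=5):
    """Return the pieces of word, split at every odd-numbered rule."""
    if len(word) < min_len or not word.isalpha():
        return [word]
    text = "." + word.lower() + "."
    rules = [0] * (len(text) + 1)        # rules[i] sits just before text[i]
    for start in range(len(text)):
        for end in range(start + 1, len(text) + 1):
            points = patterns.get(text[start:end])
            if points:
                for offset, value in enumerate(points):
                    rules[start + offset] = max(rules[start + offset], value)
    pieces, last = [], 0
    # Never break after the first letter or before the last letter.
    for i in range(2, len(word) - 1):
        if rules[i + 1] % 2 == 1:        # the rule before word[i] is odd
            pieces.append(word[last:i])
            last = i
    pieces.append(word[last:])
    return pieces

print(hyphenate("hyphenation"))  # ['hy', 'phen', 'ation']
```

Trying every substring is quadratic in the word length, which is acceptable for a sketch; a trie lets the search stop as soon as no pattern can match.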

Finally, Liang’s dissertation described a program patgen for pre-computing the hyphenating and inhibiting rules, for English or any other language, given a hyphenating dictionary. See his dissertation for details. The original lists of patterns and exceptions used by Liang for TeX 82 are shown on the next page.

Your task is to write a function that takes an input word and returns a list of suitable hyphenation points. When you are finished, you can read or run a suggested solution, or post your solution or discuss the exercise in the comments below.

Probabilistic Spell Checking

April 21, 2009

In a previous exercise we built a spell checker based on storing words in a trie. That spell checker was exact: the spell checker reported success if and only if the checked word was in the dictionary. Today we will build a spell checker that is probabilistic: it always reports success if the checked word is in the dictionary, and a report of failure is always correct, but it may occasionally report success for a word that is not in the dictionary. The probability of error can be made arbitrarily small, as determined by the programmer.

We will use a bloom filter, a data structure invented by Burton Bloom in 1970 to test membership in a set. A bloom filter consists of an array of m bits, plus k different hash functions that map set elements to the range 0 to m-1. All the bits are initially 0. To add an element, calculate the k hash values of the element, and set the bit at each of those k positions to 1. To test if an element is in the set, calculate the k hash values of the element, and return true if all k of the corresponding bits are 1, and false if any is 0. In this way, it is certain that the element is not in the set if any of its bits is 0, but it is possible that an element not in the set may be incorrectly reported as being in the set if all of its bits are 1 because they were set by other elements.

The easiest way to build a large number of hash functions is to use a single hash function and “salt” the dictionary words with an additional letter. For instance, to hash the word “hello” three times, use “ahelloa”, “bhellob”, and “chelloc” and hash with a standard string-hashing function.
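A small sketch of a bloom filter built this way is shown below. The class name `BloomFilter` is our own, and SHA-256 from Python's `hashlib` is used as the single underlying hash purely as an assumption; any reasonable string hash would do. Salting with single letters limits this sketch to at most 26 hash functions.

```python
import hashlib

class BloomFilter:
    def __init__(self, m, k):
        self.m, self.k = m, k
        self.bits = bytearray((m + 7) // 8)   # m bits, all initially 0

    def _positions(self, word):
        """Yield the k bit positions for word, one per salted hash."""
        for i in range(self.k):
            salt = chr(ord("a") + i)          # 'a', 'b', 'c', ...
            data = (salt + word + salt).encode("utf-8")
            digest = hashlib.sha256(data).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def add(self, word):
        for pos in self._positions(word):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, word):
        return all(self.bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(word))

words = BloomFilter(m=10_000, k=7)
for w in ["hello", "world", "praxis"]:
    words.add(w)
print("hello" in words)  # True
```

A word that was added always tests as present; a word that was never added tests as absent unless all seven of its bits happen to have been set by other words.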

There is considerable math involved in determining the appropriate values of m and k. For a set of n elements, the probability p of a false positive is given by the formula:

$$p = \left(1 - \left(1 - \frac{1}{m}\right)^{kn}\right)^{k} \approx \left(1 - e^{-kn/m}\right)^{k}$$

To give this a sense of scale, storing a fifty thousand word dictionary in a bloom filter of a million bits using seven hash functions will result in a false positive every 5102 words, on average.
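The scale of that figure can be checked numerically. The sketch below assumes the standard bloom filter estimate p = (1 - (1 - 1/m)^(kn))^k, where n is the number of stored words, m the number of bits, and k the number of hash functions; the function name is hypothetical.

```python
def false_positive_rate(n, m, k):
    """Standard estimate of a bloom filter's false-positive probability."""
    return (1 - (1 - 1 / m) ** (k * n)) ** k

p = false_positive_rate(n=50_000, m=1_000_000, k=7)
print(f"about one false positive every {1 / p:.0f} words")
```

For the dictionary described above this works out to roughly one false positive in five thousand words, in line with the figure quoted.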

Your task is to build a probabilistic spell checker as described above. When you are finished, you can read or run a suggested solution, or post your solution or discuss the exercise in the comments below.

Spell Checking

April 17, 2009

Spell checkers are ubiquitous. Word processors have spell checkers, as do browser-based e-mail clients. They all work the same way: a dictionary is stored in some data structure, then each word of input is submitted to a search in the data structure, and those that fail are flagged as spelling errors. There is a certain art to building the word list on which a spell checker is based; Exxon isn’t a word in anybody’s dictionary, but it is likely included in the word list, while cwm (a geological structure), which is certainly a word, is most likely omitted from the word list on the grounds that it is more likely an error than a legitimate spelling (unless you are a geologist).

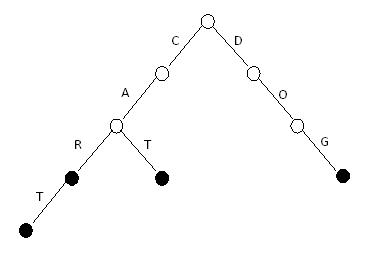

There are many appropriate data structures to store the word list, including a sorted array accessed via binary search, a hash table, or a bloom filter. In this exercise you are challenged to store the word list character-by-character in a trie. Consider this sample trie that stores the words CAR, CART, CAT and DOG:

To see that CAT is a valid spelling, follow the C branch, then the A branch, then the T branch, where you find a filled circle, indicating a word. To see that CAB is not a valid spelling, follow the C branch, then the A branch, and fail when there is no B branch.

Tries can be very fast; for instance, to see that QARTER (a misspelling of QUARTER) is not a word, follow the Q branch, then fail when there is no A branch. This is even faster than hashing, which must read all six letters of QARTER just to compute the hash value. And tries can also be space-efficient, since space is shared between common prefixes.
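A trie of this kind might be sketched in Python as follows. The class name `Trie` and its layout (one dictionary of child branches per node, plus a flag playing the role of the filled circle) are our own choices for illustration.

```python
class Trie:
    def __init__(self):
        self.children = {}     # maps a letter to the sub-trie below it
        self.is_word = False   # the "filled circle": a word ends here

    def insert(self, word):
        node = self
        for ch in word:
            if ch not in node.children:
                node.children[ch] = Trie()
            node = node.children[ch]
        node.is_word = True

    def __contains__(self, word):
        node = self
        for ch in word:
            node = node.children.get(ch)
            if node is None:   # no such branch: fail immediately
                return False
        return node.is_word

words = Trie()
for w in ["CAR", "CART", "CAT", "DOG"]:
    words.insert(w)
print("CAT" in words)  # True
print("CAB" in words)  # False
```

Note that `"CA" in words` is false even though the path exists, because no word ends at that node.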

Your task is to build a spell checker based on tries. When you are finished, you can read or run a suggested solution, or post your solution or discuss the exercise in the comments below.

Google Treasure Hunt 2008 Puzzle 4

April 14, 2009

This puzzle comes from the 2008 Google Treasure Hunt:

Find the smallest number that can be expressed as the sum of 7 consecutive prime numbers, the sum of 17 consecutive prime numbers, the sum of 41 consecutive prime numbers, the sum of 541 consecutive prime numbers, and is itself a prime number.

For example, 41 is the smallest prime number that can be expressed as the sum of 3 consecutive primes (11 + 13 + 17 = 41) and the sum of 6 consecutive primes (2 + 3 + 5 + 7 + 11 + 13 = 41).
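One possible approach, sketched in Python: sieve the primes below some bound, collect the sums of each required run length with a sliding window, and intersect the results with the primes. The function names and the explicit `limit` parameter are assumptions of this sketch; if no answer exists below the limit, the function returns `None` and the limit must be raised.

```python
def primes_below(limit):
    """Sieve of Eratosthenes: all primes less than limit."""
    if limit < 2:
        return []
    sieve = bytearray([1]) * limit
    sieve[0:2] = b"\x00\x00"
    for i in range(2, int(limit ** 0.5) + 1):
        if sieve[i]:
            sieve[i * i::i] = bytearray(len(range(i * i, limit, i)))
    return [i for i, flag in enumerate(sieve) if flag]

def consecutive_sums(primes, count, limit):
    """Set of sums of `count` consecutive primes that are below limit."""
    sums = set()
    if len(primes) < count:
        return sums
    window = sum(primes[:count])
    for lo, hi in zip(primes, primes[count:]):
        if window >= limit:
            break
        sums.add(window)
        window += hi - lo        # slide the window one prime to the right
    else:
        if window < limit:       # the last window was never recorded
            sums.add(window)
    return sums

def smallest_common_prime(counts, limit):
    """Smallest prime below limit that is a sum of each run length in counts."""
    primes = primes_below(limit)
    common = set(primes)
    for count in counts:
        common &= consecutive_sums(primes, count, limit)
    return min(common) if common else None

print(smallest_common_prime([3, 6], 100))  # 41
```

For the puzzle itself, the same call with the run lengths 7, 17, 41 and 541 and a limit of, say, ten million is a reasonable starting point.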

Your task is to find the number. When you are finished, you can read or run a suggested solution, or post your solution or discuss the exercise in the comments below.

Anagrams

April 10, 2009

Words that are formed from the same set of letters are anagrams of each other. For instance, pots, post, stop, spot, opts, and tops are anagrams.

Your task is to write a program that, given a dictionary and an input word, prints all the anagrams of the input word. You are also to determine the largest anagram class in your dictionary. When you are finished, you can read or run a suggested solution, or post your own solution or discuss the exercise in the comments below.
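A common approach is to give every word a signature made of its letters in sorted order, so that all anagrams share one signature. The Python sketch below takes that approach; the function names and the tiny word list are made up for illustration.

```python
from collections import defaultdict

def signature(word):
    """Letters of the word in sorted order; identical for all anagrams."""
    return "".join(sorted(word.lower()))

def anagram_classes(words):
    """Group the dictionary words by signature."""
    classes = defaultdict(list)
    for word in words:
        classes[signature(word)].append(word)
    return classes

def anagrams(word, classes):
    """All dictionary words that are anagrams of word, excluding word itself."""
    return [w for w in classes.get(signature(word), []) if w != word]

def largest_class(classes):
    return max(classes.values(), key=len)

dictionary = ["pots", "post", "stop", "spot", "opts", "tops", "cat", "act"]
classes = anagram_classes(dictionary)
print(anagrams("stop", classes))  # ['pots', 'post', 'spot', 'opts', 'tops']
print(largest_class(classes))
```

Building the classes once makes each later lookup a single dictionary access.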

Flipping Pancakes

April 7, 2009

Pity the poor waiter:

The chef in our place is sloppy, and when he prepares a stack of pancakes they come out all different sizes. Therefore, when I deliver them to a customer, on the way to the table I rearrange them (so that the smallest winds up on top, and so on, down to the largest at the bottom) by grabbing several from the top and flipping them over, repeating this (varying the number I flip) as many times as necessary.

Your task is to write a function that sorts a list of unique positive integers into ascending order using the pancake-flipping algorithm. When you are finished, you can read or run a suggested solution, or post your solution or discuss the exercise in the comments below.
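The waiter's procedure might be sketched in Python like this, treating index 0 as the top of the stack. The only operation used to move items is `flip`, which reverses the top k pancakes; both function names are our own.

```python
def flip(stack, k):
    """Reverse the top k pancakes; index 0 is the top of the stack."""
    stack[:k] = stack[:k][::-1]

def pancake_sort(items):
    """Sort into ascending order (smallest on top) using only flips."""
    stack = list(items)
    for size in range(len(stack), 1, -1):
        biggest = stack.index(max(stack[:size]))
        if biggest == size - 1:
            continue                   # already in its final position
        if biggest != 0:
            flip(stack, biggest + 1)   # bring the biggest to the top
        flip(stack, size)              # flip it down to its final position
    return stack

print(pancake_sort([3, 1, 4, 5, 9, 2, 6]))  # [1, 2, 3, 4, 5, 6, 9]
```

Each pass places the largest unsorted pancake with at most two flips, so the whole sort needs at most about 2n flips for n pancakes.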